Automated SEO Traffic Bot

SEO is no longer just about backlinks and keywords. Google’s algorithms now reward user signals — click-through rates, bounce rates, session duration, and engagement depth. But how do you optimize these metrics without waiting months for organic traffic data?

That’s where our automated SEO traffic bot comes in.

More than just traffic software

Ranking Analytics

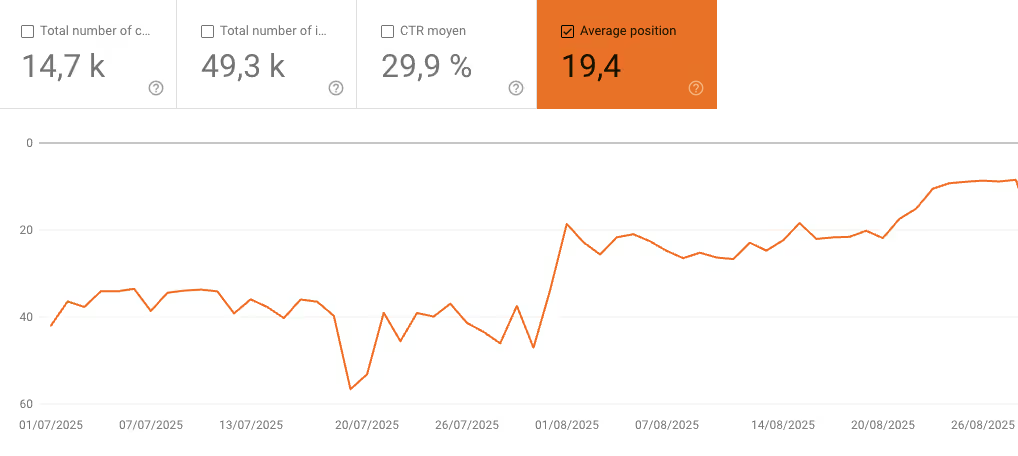

✅ Actionable analytics that reveal how optimization changes impact your ranking

Geo-Targeted Traffic

✅ Controlled, realistic simulations that mimic organic behavior

Automated Clicks

✅ White hat tools that strengthen SEO signals with real traffic from the SERP

Session duration

✅ User metrics that strengthen your website’s core engagement metrics and help you rank faster

Best IPs selection

✅ Ethical SEO automation designed to boost your SEO using the best IPs on the market

Scalable Automated Traffic

✅ Scalable traffic — from small tests to enterprise campaigns

Increase Website Traffic with Smart Automation

Simulate Real Visitors for Testing

With our website traffic bot, you can generate consistent, controlled visits that act like real users. Sessions include natural scrolling, multi-page navigation, and variable durations, the kind of engagement Google notices.

This allows you to:

- Stress-test your website before major campaigns or launches

- See how different user journeys impact bounce rate and session time

- Prepare for real traffic surges without surprises

Automated Website Visits for SEO Insights

Our automated website visits feature lets you design traffic flows that reflect real user behavior. Want to test whether visitors read blog content or drop off at the homepage? You can simulate it.

Every visit becomes an experiment, giving you data-driven insight into how your site will perform once organic visitors arrive in larger volumes.

Rank Boost Bot Traffic: Improve Engagement Signals

Google looks at more than just backlinks. It pays attention to how users interact with your site. That’s why engagement signals like bounce rate, CTR, and session depth play a critical role in rankings.

Improve Bounce Rate with Automation

A high bounce rate tells search engines that visitors don’t find value on your site. With our bounce rate bot, you can simulate sessions that navigate across multiple pages, scroll through content, and engage with your site as real users would.

The result? You can identify weak spots, restructure pages, and reduce bounce rate naturally for your real audience.
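To make "identify weak spots" concrete, here is a minimal Python sketch that computes bounce rate per landing page from a list of sessions. The session format (a list of page paths in visit order) and the function name `bounce_rate_by_landing_page` are assumptions for illustration, not part of our platform’s API.

```python
from collections import defaultdict


def bounce_rate_by_landing_page(sessions):
    """Return {landing_page: bounce_rate} for an iterable of sessions.

    Each session is a list of page paths in visit order (assumed format).
    A session counts as a bounce when it contains exactly one page view.
    """
    totals = defaultdict(int)
    bounces = defaultdict(int)
    for pages in sessions:
        if not pages:
            continue  # skip empty records
        landing = pages[0]
        totals[landing] += 1
        if len(pages) == 1:
            bounces[landing] += 1
    return {page: bounces[page] / totals[page] for page in totals}


# Illustrative data only
sessions = [
    ["/", "/pricing"],
    ["/"],
    ["/blog/post-1"],
    ["/blog/post-1", "/", "/pricing"],
    ["/"],
    ["/"],
]
rates = bounce_rate_by_landing_page(sessions)
for page, rate in sorted(rates.items(), key=lambda item: item[1], reverse=True):
    print(f"{page}: {rate:.0%}")
```

Sorting by rate puts the landing pages that lose the most visitors at the top, which is usually where restructuring pays off first.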

Boost CTR with Automated Testing

Click-through rate from the SERP is a massive ranking signal. With our automated click-through rate (CTR) bot, you can test different meta titles and descriptions by simulating clicks from search results.

This isn’t manipulation — it’s market research at scale. By knowing which variations earn more clicks, you refine your listings to attract genuine searchers more effectively.
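Knowing which variation "earns more clicks" means checking that the difference is larger than chance. The sketch below compares two title variants with a two-proportion z-test, using only the Python standard library. The function name `compare_ctr` and the click and impression counts are hypothetical; this is one common way to compare rates, not necessarily the method our dashboard uses.

```python
import math


def compare_ctr(clicks_a, impressions_a, clicks_b, impressions_b):
    """Two-proportion z-test for the CTR of variant B versus variant A.

    Returns (ctr_a, ctr_b, z, p_value), where p_value is two-sided.
    """
    ctr_a = clicks_a / impressions_a
    ctr_b = clicks_b / impressions_b
    # Pooled CTR under the null hypothesis that both variants perform equally
    pooled = (clicks_a + clicks_b) / (impressions_a + impressions_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / impressions_a + 1 / impressions_b))
    if se == 0:
        # All clicks or no clicks in both variants: no measurable difference
        return ctr_a, ctr_b, 0.0, 1.0
    z = (ctr_b - ctr_a) / se
    # Two-sided p-value from the standard normal distribution
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return ctr_a, ctr_b, z, p_value


# Hypothetical counts: variant A is the current title, variant B the new one
ctr_a, ctr_b, z, p_value = compare_ctr(40, 1000, 60, 1000)
print(f"A: {ctr_a:.1%}  B: {ctr_b:.1%}  z = {z:.2f}  p = {p_value:.3f}")
```

A small p-value (commonly below 0.05) suggests the gap between the two titles is unlikely to be noise. With few impressions, even a large-looking gap may not be meaningful.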

Gain Rank Boost Bot Traffic Insights

Controlled automation reveals which changes actually drive results. Instead of guessing, you’ll have evidence-backed strategies to improve visibility and climb search rankings.

Website Bot Traffic Analytics: Insights Beyond Numbers

Traffic is meaningless without interpretation. That’s why our system comes with advanced website bot traffic analytics.

You’ll see:

- 📊 Engagement depth: How far simulated visitors travel into your site

- ⏱️ Session duration: How long sessions last across different content types

- 🔀 Navigation paths: The most common click flows users take

- 🌍 Geographic diversity: How your site performs with global IP traffic

With this level of granularity, you can:

- Separate valuable engagement signals from noise

- Detect anomalies or risks from suspicious traffic sources

- Optimize content and UX for stronger organic performance
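As a rough illustration of the metrics listed above, the following Python sketch summarizes engagement depth, session duration, navigation paths, and geographic spread from raw session records. The record fields (`pages`, `duration_s`, `country`) and the function name `summarize_sessions` are assumptions made for this example, not our export format.

```python
from collections import Counter
from statistics import mean


def summarize_sessions(sessions):
    """Summarize a non-empty list of session records.

    Each record is a dict with assumed keys:
      pages      - list of page paths in visit order
      duration_s - session length in seconds
      country    - two-letter country code
    """
    depths = [len(s["pages"]) for s in sessions]
    durations = [s["duration_s"] for s in sessions]
    # Join each page sequence into one string so identical paths count together
    paths = Counter(" > ".join(s["pages"]) for s in sessions)
    by_country = Counter(s["country"] for s in sessions)
    return {
        "avg_depth": mean(depths),
        "avg_duration_s": mean(durations),
        "top_paths": paths.most_common(3),
        "sessions_by_country": dict(by_country),
    }


# Illustrative data only
sessions = [
    {"pages": ["/", "/pricing"], "duration_s": 90, "country": "US"},
    {"pages": ["/"], "duration_s": 10, "country": "DE"},
    {"pages": ["/", "/pricing"], "duration_s": 120, "country": "US"},
    {"pages": ["/blog", "/", "/pricing"], "duration_s": 180, "country": "FR"},
]
summary = summarize_sessions(sessions)
print(summary)
```

Averages hide outliers, so in practice you would likely look at medians or per-page breakdowns as well.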

Ethical SEO Automation: Stay Ahead, Stay Safe

The words “bot traffic” and “Google penalty” scare many marketers. And for good reason: manipulative black-hat bots put your site at risk.

But here’s the truth: automation is not the problem — misuse is.

White Hat SEO Bot Tools

We focus on ethical SEO automation. Our tools are designed for:

- Performance testing

- CTR optimization experiments

- Engagement signal simulations

- Analytics and diagnostics

These practices align with responsible SEO — giving you the insight edge without risking penalties.

Learning from Black Hat SEO Bots

We also understand how black hat SEO bots work — and that makes our solution even more powerful. Instead of using them to manipulate rankings, we use that knowledge to build defenses and craft strategies that keep your site safe, stable, and compliant.

Rethinking Fake Traffic SEO Impact

You’ve heard the warnings: “fake traffic” doesn’t help SEO. And that’s true — if it’s random and meaningless.

But what if you reframed “fake traffic” as simulated traffic? Suddenly, the story changes.

Simulated visits help you:

- Run controlled experiments before investing in full campaigns

- Predict how real users will interact with your site

- Spot performance gaps before they cost you rankings

It’s not about “tricking” search engines. It’s about optimizing smarter, faster, and safer.

Key Features of Our SEO Traffic Generator Software

Our platform is designed to give you full control and flexibility.

- 🌍 Global IP diversity: 87M+ IPs across 170+ countries

- ⏱️ Custom session duration: Choose how long visitors stay

- 📄 Multi-page navigation: Simulate deep site exploration

- 🔍 CTR simulation: Test meta titles & descriptions

- 📈 Analytics dashboard: Monitor traffic & engagement in real-time

- ⚡ Scalable plans: From small businesses to enterprise-level needs

Who Uses Our Automated Traffic Bot?

- SEO Agencies: Test campaigns before rolling out to clients

- E-commerce Stores: Optimize conversion paths for real buyers

- Content Publishers: Refine engagement strategies for articles and blogs

- Enterprises: Stress-test infrastructure for traffic surges

If you’re serious about SEO, automation isn’t optional anymore; it’s a competitive advantage.

Get Trusted Organic Website Traffic

Reach the First Page of Google

From search results to your site: traffic that truly boosts rankings

Plans for Every Site

Monitor key performance metrics, identify trends, and optimize your marketing efforts with AI-powered analytics.