What Is a Traffic Bot and How Does It Really Affect SEO in 2026

At its core, a traffic bot is just a piece of software built to act like a human visitor on a website. It automates clicks, page views, and browsing sessions, creating a stream of artificial activity. This ghost traffic can be used for anything from harmlessly testing a server to committing serious click fraud.

What Exactly Is a Traffic Bot?

Think of your website's analytics as the dashboard of a ship, guiding every decision you make. Now, imagine someone is deliberately messing with the controls, feeding you false readings that send you sailing straight for an iceberg. That’s exactly what a traffic bot does to your business.

These automated scripts are programmed to land on your site and mimic human behavior, creating the illusion of a busy, engaged audience. But it's a hollow victory. A real visitor might read your blog, sign up for a newsletter, or buy a product. A bot’s visit is completely empty—it contributes nothing of actual value.

The Scale of the Problem

The internet isn't what it used to be. It’s no longer a place dominated by people. In fact, by 2026, bots are on track to generate a shocking 51% of all global web traffic, officially outnumbering human users.

The real trouble comes from malicious "bad bots," which already make up 37% of total traffic. For an SEO agency or an e-commerce brand, this bot tsunami can warp analytics by a staggering 50-83% in some cases, throwing all your key business metrics into chaos. You can see more data on this bot-driven internet over at webscraft.org.

This reality forces every marketer and business owner to ask some tough questions:

- Can I even trust my analytics? If a huge chunk of your traffic is fake, your entire understanding of user behavior is built on a lie.

- What is this costing my business? Decisions based on bot-inflated numbers lead directly to wasted ad spend, flawed content strategies, and misallocated resources.

- Is this hurting my SEO? Search engines are designed to spot and penalize low-quality engagement, making bot traffic a direct threat to your hard-earned rankings.

A traffic bot creates a "hall of mirrors" effect in your analytics. It reflects an image of success—high traffic, lots of page views—but behind the reflection, there's nothing of substance. Your real audience and business goals get lost in the noise.

Ultimately, learning to spot and sidestep bot traffic isn't just a good idea; it's essential for survival. This guide will walk you through the different kinds of automated traffic and show you how to protect your site. For a closer look at the tools out there, you might also want to check out our deep dive into various traffic bot software.

How Different Types of Traffic Bots Operate

Traffic bots aren't all cut from the same cloth. To get a handle on the problem, you have to understand that they range from laughably simple scripts to scarily sophisticated programs designed to act just like a real person.

Think of it this way: the most basic bot is like a ghost that just opens your shop's front door and leaves. It triggers the bell—registering a page view—but does nothing else. A more advanced bot, however, can actually walk the aisles, look at products, and maybe even put something in a shopping cart before vanishing into thin air. Each level of complexity leaves a totally different footprint in your data.

The Foundation: Simple Scripts and Proxy Networks

At the bottom of the barrel, you have the simple script. This is the most basic kind of traffic bot, doing little more than making a direct request to your server to load a page. That's it. It creates a single, low-quality hit: always a line in your server logs, and a page view in your analytics only if the script also fires your tracking request. It’s the digital version of a "ding-dong ditch": fast, noisy, and incredibly easy to spot.

Of course, these bots don't want to get caught, so they hide their origins using proxy networks. A proxy acts as a go-between, masking the bot's real IP address. They usually come in two flavors:

- Datacenter Proxies: These are IPs from commercial data centers like AWS or Google Cloud. They're cheap, fast, and you can get them in bulk, but they’re also a dead giveaway. A sudden flood of traffic from a known datacenter IP range is a massive red flag.

- Residential Proxies: These IPs are assigned to real home internet connections, making them look like legitimate household visitors. Because of this, they are much, much harder to detect and far more expensive.

Clever bot operators will mix and match these proxies to make their traffic look more random. But at the end of the day, the underlying behavior is still just a simple script. It’s like a thief wearing a different disguise for every robbery—the clumsy method is still the same.
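To make the datacenter red flag concrete, here is a minimal sketch of how a list of visitor IPs could be checked against known datacenter ranges. The ranges below are placeholders (reserved documentation networks), not real cloud provider blocks, and the function names are made up for illustration; in practice you would load the ranges your hosting or cloud providers publish.

```python
import ipaddress

# Hypothetical datacenter ranges for illustration only. These are reserved
# documentation networks, NOT real cloud provider ranges.
DATACENTER_RANGES = [
    ipaddress.ip_network("192.0.2.0/24"),
    ipaddress.ip_network("198.51.100.0/24"),
]

def is_datacenter_ip(ip: str) -> bool:
    """Return True if the address falls inside any listed datacenter range."""
    addr = ipaddress.ip_address(ip)
    return any(addr in net for net in DATACENTER_RANGES)

def datacenter_share(ips: list[str]) -> float:
    """Fraction of visits whose IP sits in a listed datacenter range."""
    if not ips:
        return 0.0
    return sum(is_datacenter_ip(ip) for ip in ips) / len(ips)
```

A check like this only catches datacenter proxies. Residential proxies will pass it, which is exactly why they cost more.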

The Mid-Tier: Headless Browsers and Spoofing

On the next rung up the ladder, you’ll find bots using a headless browser. Picture a web browser you know, like Chrome or Firefox, but strip away all the visual parts—no windows, no buttons, no address bar. You’re left with just the engine that renders websites behind the scenes.

This is a game-changer because it allows the bot to execute JavaScript, load images, and interact with page elements, just like a real person's browser would. Suddenly, it can trigger much more than a simple page view.

A headless browser lets a bot graduate from just knocking on the door to actually walking inside and looking around. It can simulate scrolling, fill out forms, and spend time on a page, making it far more convincing than a basic script.

To complete the disguise, these bots also practice user-agent spoofing. A user agent is just a little text string your browser sends to identify itself (like "Chrome on Windows 11"). Bots can easily fake this information to pretend they’re coming from any browser, device, or operating system imaginable, which further muddies the analytical waters.
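As a rough illustration, the sketch below inspects a user-agent string for a few obvious giveaways. It assumes the bot has not bothered to spoof its user agent: unmodified headless Chrome has historically identified itself with a "HeadlessChrome" token. The version cutoff and the function name are assumptions chosen for this example, not an established standard.

```python
import re

# Assumed cutoff: treat Chrome major versions below this as suspiciously old.
MIN_PLAUSIBLE_CHROME = 100

def user_agent_flags(ua: str) -> list[str]:
    """Return a list of reasons a user-agent string looks automated."""
    flags = []
    if not ua.strip():
        flags.append("empty user agent")
    if "HeadlessChrome" in ua:
        flags.append("headless browser token")
    # Pull the Chrome major version, if one is present.
    match = re.search(r"Chrome/(\d+)", ua)
    if match and int(match.group(1)) < MIN_PLAUSIBLE_CHROME:
        flags.append("outdated Chrome version")
    return flags
```

Because the user agent is so easy to fake, an empty result here proves nothing. The check is only useful for catching the laziest bots.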

The Most Advanced Bots

The most sophisticated bots are programmed to mimic the subtle, almost random behaviors of a real human. We’re talking about things like:

- Mouse Movements: Simulating the natural, slightly jerky path a real user’s cursor takes as it moves across the screen.

- Realistic Dwell Time: Staying on a page for a varied and believable amount of time, not just a fixed 15 seconds.

- Click Paths: Navigating through multiple pages on your site in a sequence that actually makes sense.

But here's the thing: even with all these tricks, the best traffic bots eventually show their hand. They operate with a cold, mathematical precision that humans just don't have. They can’t fake genuine interest, unpredictable curiosity, or the emotional drivers that lead to a real conversion. This fundamental lack of intent is exactly why they ultimately fail to deliver any real value for your SEO or your business.

The Real Cost of Fake Traffic to Your Business

Trusting analytics tainted by a traffic bot is like trying to navigate a dense fog with a faulty GPS. Your dashboard might tell you you're speeding along, but you’re actually driving straight into a ditch. Fake traffic creates a dangerous illusion, reflecting phantom success that leads to some truly terrible business decisions.

It all starts with your data. Inflated session counts, bizarre bounce rates that are often stuck at 0% or 100%, and a complete lack of conversions paint a misleading picture of your site's performance. Every metric you rely on to build your strategy becomes worthless, turning your analytics platform from a trusted guide into a source of misinformation.

This corrupted data has a direct and painful financial impact, especially if you’re running paid ads. Picture this: you launch a pay-per-click (PPC) campaign and see a huge spike in clicks. Thinking you've struck gold, you pour more money in. In reality, you're just paying for bots to visit your site, bleeding your marketing budget dry for zero return.

Wasted Resources and Strained Infrastructure

The costs go well beyond just wasted ad spend. Every single visit to your website, whether from a human or a bot, eats up server resources. A flood of automated traffic can easily overwhelm your hosting infrastructure, slowing down your site for real customers or, in a worst-case scenario, crashing it completely.

This means you could be paying for a beefier, more expensive hosting plan just to accommodate an army of fake visitors. It's a digital traffic jam that not only frustrates genuine users but also inflates your operational costs for no good reason.

The true cost of fake traffic isn't just the money you burn on ads. It's the missed opportunities, the flawed strategies you chase, and the valuable time you lose hunting for ghosts in your data. It's a silent business killer disguised as engagement.

The rise of AI has thrown gasoline on this fire. The explosion of AI and search crawler bots in 2026 has become a massive headache for site owners. AI bots alone now account for 4.2% of HTML requests in an environment where bots make up 51% of all traffic. This automated frenzy breaks sites, skews SEO data, and crushes organic reach as publishers watch their Google traffic disappear. You can find more insights on these web crawling stats and see how they're affecting businesses.

The Hidden Damage to Your SEO

Perhaps the most serious long-term damage from traffic bots is to your search engine optimization (SEO). Search engines like Google are smarter than ever, using sophisticated algorithms to gauge user experience. They don’t just count visits; they analyze what people do during those visits.

When Google sees a ton of traffic hitting your site with awful engagement signals—like an instant bounce or zero time on page—it concludes that your site offers a poor user experience. A traffic bot is a machine built to send exactly these kinds of negative signals, over and over again.

Here's how that plays out:

- Sky-High Bounce Rates: Bots visit one page and leave immediately. This tells Google your content failed to meet the searcher's needs.

- Zero Dwell Time: When sessions last just a few seconds, it signals that your page is boring or irrelevant.

- No Real Conversions: High traffic with no goal completions (like sign-ups or sales) shows that your site isn't providing any real value.

Over time, these signals convince search engines that your website isn't a helpful resource. The result? Your rankings can plummet, making your site invisible to the real human audience you’re trying to reach. You’re left with inflated vanity metrics and a collapsing organic presence—the worst of both worlds.

To see the difference clearly, let's break down how bot signals stack up against real human behavior.

Impact of Bot Traffic vs Real Human Traffic on Key Metrics

The table below starkly contrasts the digital fingerprints left by low-quality bots versus authentic human visitors. This is precisely what search engines and analytics platforms see, and it directly influences how they perceive the value of your website.

| Metric | Traffic Bot Signal | Authentic Human Signal | Impact on Business |
|---|---|---|---|
| Bounce Rate | Artificially low (0%) or extremely high (100%). | Varies naturally based on content and user intent. | Skewed bounce rates make it impossible to judge content quality or landing page effectiveness. |
| Session Duration | Near-zero, typically 1-3 seconds. | Varies widely, often several minutes for engaged users. | Convinces search engines your content is unengaging, leading to lower rankings. |
| Pages per Session | Almost always 1. The bot visits a single page and leaves. | Typically 2+ as users explore the site. | Lack of internal navigation signals a dead-end user experience and low site value. |
| Goal Conversions | Zero. Bots don't buy products or fill out contact forms. | Varies, but a healthy site sees consistent conversions. | You get a false sense of traffic volume while generating zero revenue or leads. |
| Traffic Source | Often appears as "Direct" or from suspicious referring domains. | Comes from diverse, legitimate sources (Organic, Social, Referral). | Corrupts your channel performance data, leading to poor marketing investment decisions. |

As you can see, the two types of traffic tell completely different stories. Bot traffic creates a pattern of shallow, valueless interactions that actively harm your site's reputation, while human traffic shows the rich, meaningful engagement that builds a healthy business.

How to Detect and Analyze Bot Traffic on Your Website

Think of yourself as a digital detective. Your website's analytics is the scene of the crime, and a traffic bot is the intruder who always leaves clues behind. To keep your data clean and trustworthy, you need to learn how to spot these tell-tale signs and filter out the automated noise from your real human users.

The first and most obvious clue is often a sudden, unnatural spike in traffic. If you're looking at your analytics and see a massive surge of visitors overnight—without any new marketing campaign, press mention, or viral post to explain it—you should be suspicious. It’s a major red flag, especially if that traffic all comes from a single, unexpected geographic location where you have no real customer base.
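If you export daily session counts, a spike like this can be flagged automatically. The sketch below compares each day against the trailing week and flags days that sit far above the recent average. The seven-day window, the three-standard-deviation threshold, and the function name are all assumptions for illustration; tune them to your own traffic.

```python
from statistics import mean, stdev

def find_spikes(daily_sessions: list[int], window: int = 7,
                threshold: float = 3.0) -> list[int]:
    """Return indexes of days far above the trailing-window average."""
    spikes = []
    for i in range(window, len(daily_sessions)):
        history = daily_sessions[i - window:i]
        mu = mean(history)
        # Floor the deviation at 1.0 so a perfectly flat baseline
        # does not cause a division by zero.
        sigma = max(stdev(history), 1.0)
        if (daily_sessions[i] - mu) / sigma > threshold:
            spikes.append(i)
    return spikes

sessions = [100, 110, 95, 105, 98, 102, 107, 104, 900, 101]
spike_days = find_spikes(sessions)  # flags index 8, the 900-session day
```

A flagged day is not proof of bots. It is a prompt to check whether a campaign, press mention, or viral post explains the jump.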

Finding the Clues in Your Analytics

To start the investigation, you need to dive into Google Analytics (or your platform of choice) and look for behavior that just doesn't make sense for a human being. Bots operate with a cold, robotic precision that real people simply don't have. Getting good at detecting bots and spam in Google Analytics is crucial for making sure the data you rely on is actually clean and actionable.

Here are the key metrics to put under the microscope:

- Extreme Bounce Rates: Keep an eye out for traffic segments with a bounce rate of exactly 100% or 0%. A 100% bounce rate means every "visitor" landed on a single page and left immediately—a classic signature of a simple bot. A perfect 0% is just as weird and often points to a sophisticated bot firing off multiple pageview events to seem engaged.

- Impossible Session Durations: Pay close attention to sessions lasting 0-1 seconds. No real person can even load and process a webpage in that amount of time. A high number of these zero-second visits is a dead giveaway that low-quality bots are just hitting your site and bouncing instantly.

- Suspicious Referral Sources: Your referral report can be a goldmine of evidence. Scan for traffic coming from shady or unknown domains. If you spot referrals from sites like "get-free-traffic-now.com" or a long list of gibberish domains, you've almost certainly found a source of bot activity.
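The first two checks above are easy to automate once you export traffic segments (by referrer, country, or similar) from your analytics tool. This is a minimal sketch: the `Segment` fields, the 50-session minimum, and the one-second cutoff are assumptions for the example, not fields or defaults from any particular analytics platform.

```python
from dataclasses import dataclass

@dataclass
class Segment:
    name: str              # e.g. a referrer domain or a country
    sessions: int
    bounce_rate: float     # percentage, 0-100
    avg_duration_s: float  # average session duration in seconds

def suspicious_segments(segments: list[Segment], min_sessions: int = 50):
    """Return (name, reasons) for segments with bot-like engagement."""
    flagged = []
    for seg in segments:
        if seg.sessions < min_sessions:
            continue  # too little data to judge
        reasons = []
        if seg.bounce_rate in (0.0, 100.0):
            reasons.append("extreme bounce rate")
        if seg.avg_duration_s <= 1.0:
            reasons.append("near-zero session duration")
        if reasons:
            flagged.append((seg.name, reasons))
    return flagged
```

The minimum session count matters: a segment with three visits can hit a 100% bounce rate by pure chance.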

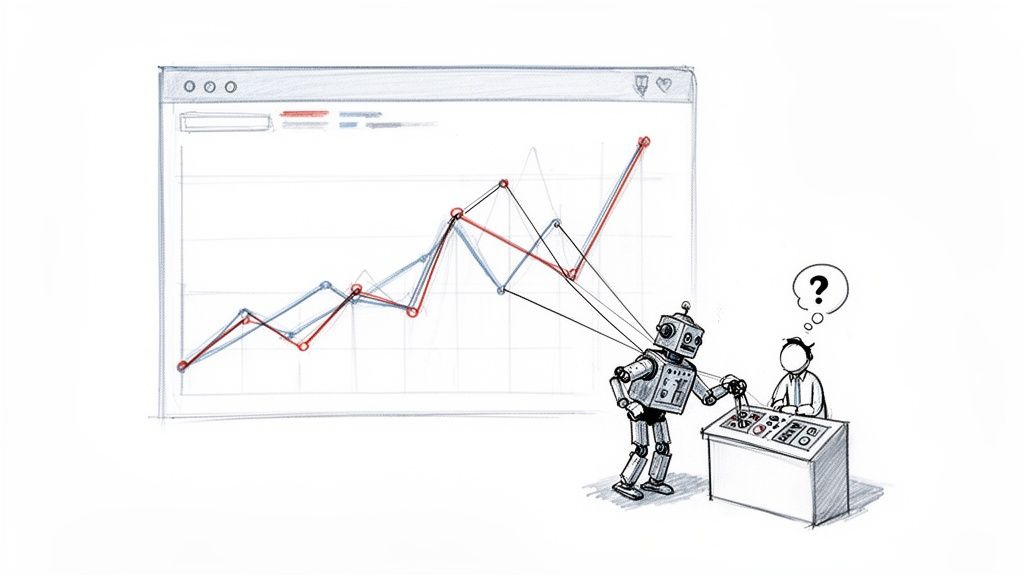

This infographic breaks down how this fake traffic starts a chain reaction that distorts your data and ultimately hurts your business.

As you can see, the damage flows from the bot itself, contaminates your data, and ends up eroding your ability to make smart business decisions.

Digging Deeper with Technical Signals

Beyond the obvious engagement metrics, bots often give themselves away with their technical fingerprints. These details are the concrete evidence you need to confirm your suspicions and start cleaning things up.

Head over to the "Technology" or "Audience" reports in your analytics and look for unnatural patterns in these areas:

- Browser and Operating System: Is a huge chunk of your traffic coming from an ancient browser version, like Chrome 35? Or is an outsized share coming from Linux, the operating system most servers run? Some real visitors do use desktop Linux, but a sudden surge is highly unusual for a typical audience.

- Screen Resolution: Look for bizarre or completely uniform screen resolutions. A sudden flood of visits from a tiny resolution (like 800x600) or thousands of sessions from the exact same one points to automated scripts running in a controlled environment.

- Network Hostname: Most bots are run from commercial data centers. If your reports show hostnames that include terms like "amazon-aws," "google-cloud," or "ovh," you can be almost certain it’s not a real person browsing from their couch.

The secret to spotting bots is looking for a lack of randomness. Human behavior is messy and varied—we use different devices, browsers, and network connections. Bots are uniform and repetitive. That uniformity is their biggest giveaway.

For those who are more technically inclined, analyzing your server logs is the most direct way to catch a traffic bot. Your server logs record every single request, and by digging into them, you can spot things analytics might miss—like thousands of requests from a single IP address in just a few seconds. That’s not a fast reader; it’s a script.
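Here is a rough sketch of that log analysis. It assumes access logs in the widely used Common Log Format, where each line starts with the client IP and carries a bracketed timestamp; your server's format may differ. The request limit, the window size, and the function name are assumptions for illustration.

```python
import re
from collections import defaultdict
from datetime import datetime, timedelta

# Matches the client IP and the bracketed timestamp at the start of a line.
LOG_PATTERN = re.compile(r"^(\S+) \S+ \S+ \[([^\]]+)\]")

def burst_ips(log_lines, max_requests=100, window_s=10):
    """Return IPs that exceed max_requests within any window_s-second span."""
    times_by_ip = defaultdict(list)
    for line in log_lines:
        m = LOG_PATTERN.match(line)
        if not m:
            continue  # skip lines that do not fit the assumed format
        ip, stamp = m.groups()
        times_by_ip[ip].append(
            datetime.strptime(stamp, "%d/%b/%Y:%H:%M:%S %z"))

    window = timedelta(seconds=window_s)
    flagged = set()
    for ip, times in times_by_ip.items():
        times.sort()
        start = 0
        # Slide a window over the sorted timestamps for this IP.
        for end, t in enumerate(times):
            while t - times[start] > window:
                start += 1
            if end - start + 1 > max_requests:
                flagged.add(ip)
                break
    return flagged
```

Bots that rotate through proxy networks spread their requests across many IPs, so a clean result here does not rule them out.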

By combining these behavioral and technical clues, you can build a strong case against bot traffic. Once you’ve identified the culprits, you can start setting up filters to clean your data and finally get a true picture of your website’s performance. For a more automated defense, you might look into dedicated tools like those covered in our review of bot detection services.

Why Traffic Bots Are a Dead End for Sustainable SEO

Using a traffic bot for SEO is like building a house on a foundation of sand. Sure, it might look like you've built something impressive for a moment, but the whole thing is destined to come crashing down. This entire strategy is flawed because it completely misses the point of what search engines like Google actually value.

Google has poured billions into an algorithm designed to understand and reward genuine human engagement. It doesn't just see a "click." It analyzes the complex, subtle signals that show a real person found something useful. Bots simply can't fake these signals well enough to matter in the long run.

The Inability to Mimic Real Engagement

A bot can be programmed to land on a page, but it can't truly replicate the behavior of a curious human. Think about what you do when you find a great article. You might slow down to re-read a compelling paragraph, click over to a related post, or maybe even come back a few days later when you need that information again.

A traffic bot, on the other hand, just runs a cold, predictable script. Its movements are robotic and lack the nuanced, sometimes random, patterns of genuine interest. This leaves a trail of obvious red flags that modern algorithms are specifically trained to spot:

- Meaningless Dwell Time: A bot can sit on a page for 60 seconds, but it’s not actually doing anything. Google's systems are smart enough to tell the difference between someone actively reading and interacting versus a script just keeping a tab open.

- Illogical Navigation Paths: Bots often follow rigid, unnatural click paths that no real person would. Their journey through a site looks scripted because it is, immediately betraying their automated nature.

- No Return Visits: Happy users come back. Bots almost never do. This tells Google that your site isn't memorable or valuable enough to earn a second look from a real visitor.

Chasing SEO shortcuts with bots is a major distraction from the only work that truly matters: creating real value for actual human beings. You're trying to game a system that is literally built to reward authenticity.

Google's Policies and Inevitable Penalties

Putting aside how ineffective they are, using automated traffic is also a clear violation of Google's rules. Google’s policies explicitly forbid any "automated programs or services to create clicks." When they catch you—and they will—the penalties can be severe enough to completely tank your website's visibility.

Getting caught doesn't just mean you'll slip a few spots in the rankings. The fallout can be catastrophic, from a massive ranking drop for all of your keywords to complete de-indexing, which means your site is scrubbed from Google's search results entirely. Worse, some malicious bots can lead to serious problems like SEO poisoning, which can permanently tarnish your site's reputation.

At the end of the day, a traffic bot is a high-risk, zero-reward gamble. It gives you a fleeting illusion of activity while actively poisoning your site with negative signals that will get you penalized. Real SEO success is a marathon, not a sprint. There are no shortcuts, and the only winning strategy is to earn your rankings by being the best possible resource for a real human audience.

A Smarter Alternative: Human-Powered CTR Optimization

So, if using a traffic bot is a dead end for any serious SEO strategy, what's the alternative? How can you genuinely improve the user engagement signals that Google clearly values? The answer isn't to give up on improving user signals, but to completely rethink how you get there.

The smart, sustainable approach uses a distributed network of real human beings to generate authentic engagement. This is the polar opposite of a bot’s empty, automated actions. We’re talking about creating legitimate data that proves to Google your page is a valuable and relevant result for a specific search query.

The Power of Authentic Human Clicks

Services like ClickSEO are built on an entirely different foundation. Instead of deploying automated scripts, they tap into a massive network of real people. These aren't bots in a data center; they're individuals using their own devices, complete with unique residential and mobile IP addresses, performing real searches.

The process is designed to mirror the exact behavior Google wants to see and reward:

- A real person types your target keyword into Google.

- They scroll through the search results and find your website.

- They click on your link, sending a clear signal to Google that your result was the most compelling.

- Most importantly, they then spend real time on your page, actually engaging with the content.

This creates a cascade of positive, trustworthy signals. You see an improved click-through rate (CTR), longer average session durations, and a lower bounce rate—all driven by genuine human interest, not a predictable script. To get a better handle on this key metric, you can read our complete guide on what click-through rate is and why it’s so critical for rankings.

Why Human Engagement Will Always Outperform a Bot

The magic is in the authenticity. A real person’s behavior is messy, varied, and nuanced. They might scroll at different speeds, get distracted and click on an internal link, or pause to read a paragraph that catches their eye. That randomness is impossible for any traffic bot to fake convincingly.

A bot can simulate a visit, but only a human can generate true engagement. Search engines are designed to tell the difference, rewarding the latter while penalizing the former. This is the dividing line between a risky shortcut and a sustainable SEO strategy.

This human-powered approach gives you the best of both worlds. You can strategically boost the engagement metrics that influence your rankings without ever touching the risky automation that violates Google's guidelines. It’s a method that focuses on showing search engines exactly what they want to see: that real people are searching for your keywords, finding your site, and liking what they see.

By leaning into genuine human interaction, you build a foundation for lasting SEO success. This helps your pages climb the rankings and, in turn, attract even more organic traffic. It’s about reinforcing your value in the eyes of search engines, one authentic click at a time.

Frequently Asked Questions About Traffic Bots and SEO

Let's cut through the noise. Here are the straight answers to the questions we hear all the time about traffic bots and their place in SEO.

Can a Traffic Bot Actually Help My SEO Rankings?

Let’s be blunt: no. A traffic bot will do more harm than good for your SEO in the long run.

Sure, it can make your page view numbers shoot up, but that's just a vanity metric. Search engines are far too smart for that. They see the real story behind the numbers—like 100% bounce rates and session durations that last less than a second—and correctly interpret it as a terrible user experience. That's a huge red flag that will only sink your rankings over time.

Is Using a Traffic Bot Against Google's Rules?

Yes, absolutely. It's a clear violation of Google's spam policies, part of Google Search Essentials (formerly the Webmaster Guidelines). Those policies specifically forbid any kind of automated traffic generation that's designed to manipulate search results.

Don't mistake this for a gray-area tactic. Using a traffic bot is a classic black-hat method that puts your entire website on the line. Penalties can be severe, from getting pushed to page 10 to being completely de-indexed from Google.

Google considers this spam, plain and simple. They actively hunt down and penalize sites that do it to protect the integrity of their search results.

What Is the Difference Between a Traffic Bot and a Web Crawler?

This is a great question. While both are automated programs, their purpose and behavior couldn't be more different.

- Web Crawlers: These are the "good bots," like Googlebot. Their job is to discover and index pages for search engines. They are the reason your site can even show up in search results, and they typically follow the rules you set in your robots.txt file.

- Traffic Bots: These are usually "bad bots" built to fake human activity. Their goal is to generate artificial engagement signals, often for malicious or manipulative reasons, and they usually ignore website rules while hogging your server's resources.

Think of a web crawler as a helpful librarian, carefully cataloging every book so people can find what they need. A traffic bot is like someone paying a crowd to run into the library, pull books off the shelves, and leave immediately, creating chaos and signaling to the librarian that the place is a mess.
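To show what "following the rules" looks like in practice, here is a small sketch of the check a well-behaved crawler performs before requesting a page, using Python's standard `urllib.robotparser` module. The robots.txt rules, the crawler name, and the URLs are made up for the example.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt rules for illustration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

def may_fetch(user_agent: str, url: str) -> bool:
    """Return True if the rules allow this user agent to fetch the URL."""
    return parser.can_fetch(user_agent, url)

# A polite crawler skips any URL where this returns False.
blog_allowed = may_fetch("ExampleCrawler", "https://example.com/blog/")
admin_allowed = may_fetch("ExampleCrawler", "https://example.com/admin/login")
```

Nothing enforces this check. Compliance with robots.txt is voluntary, which is why a traffic bot can simply skip it.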

Can Analytics Tools Like Google Analytics Detect Bot Traffic?

Yes, but it's not a perfect system. Google Analytics automatically screens out traffic from known bots and spiders, but the more sophisticated traffic bots are designed to get around that filter.

These advanced bots can use residential IP addresses and mimic human mouse movements and clicking patterns, making them much tougher to catch automatically. That’s why you have to be the real detective. Keep an eye out for those tell-tale signs: sudden traffic spikes from odd locations, nonsensical engagement metrics, and referral sources that don’t add up. Built-in bot filtering alone isn't enough to keep your data clean.

Ready to improve your SEO with real, human engagement instead of risky bots? ClickSEO drives authentic clicks from real users in the SERPs to boost your rankings safely and sustainably. Start your free trial today and see the difference.