CTR Booster: Safe Strategies for SEO in 2026

You know the situation. A page is well written, technically clean, internally linked, and already indexed. It still sits on page two or drifts around the lower half of page one while weaker competitors pull more clicks.

That usually means the problem is not only content quality. It is often a user signal gap. Google can see which listings get chosen, how people behave after the click, and whether that result seems to satisfy intent.

A CTR booster exists to influence that layer. Used carefully, it can help a page or local listing look more relevant because more searchers appear to choose it and engage with it. Used carelessly, it can create patterns that look manufactured. The difference matters.

Stuck on Page Two? Understanding the CTR Booster

Your page is already visible. It picks up impressions, sits just outside the top results, and still loses clicks to listings that are not clearly better. That is usually the point where a CTR booster enters the discussion.

A CTR booster is a tool or service designed to influence search behavior signals around a specific query and URL. The goal is simple: get more believable searchers to choose your result, spend time on the page, and behave in a way that supports the page's relevance for that keyword.

The important distinction is authenticity. A human-driven campaign and a bot-driven campaign may both increase clicks, but they do not create the same footprint. One can resemble normal search behavior closely enough to shift performance. The other often leaves patterns that are easier to spot and easier for Google to discount.

What problem it is trying to solve

Many pages stuck in positions 6 through 20 already have the basics in place. The page is indexed, the topic matches the query, and the site has enough authority to compete. What they lack is stronger evidence that searchers prefer that result over the options around it.

A CTR booster tries to strengthen that evidence on pages that already have ranking potential. It does not create relevance from nothing. It reinforces relevance that is already close to breaking through.

The same principle applies in local SEO. Search activity, listing interactions, calls, and direction requests can influence how prominent a business appears, but only when the underlying business profile is already credible and competitive.

What it is not

A CTR booster works best as a layer on top of solid SEO, not as a replacement for it.

Weak title tags, thin pages, poor internal linking, slow load times, and weak local signals still need to be fixed first. If the snippet is unappealing or the page fails intent after the click, added activity usually produces unstable gains. If you need a baseline refresher, review what click-through rate is and why it matters before going further.

Practical takeaway: Use CTR boosting on pages that already earn impressions and have a realistic path to move up. If a page is poorly matched to the query, fix the page before you try to influence behavior around it.
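As a sketch of that screening step, the Python below filters a Search Console performance export for pages that already earn impressions and sit in the 6-to-20 position band described earlier. The `QueryRow` fields, the `find_candidates` name, and the 100-impression threshold are assumptions for illustration; map them to whatever columns your export actually contains.

```python
from dataclasses import dataclass


@dataclass
class QueryRow:
    """One page/query row from a Search Console performance export (field names assumed)."""
    page: str
    query: str
    clicks: int
    impressions: int
    position: float  # average position; lower is better


def find_candidates(rows, min_impressions=100, best_pos=6.0, worst_pos=20.0):
    """Keep rows that already earn impressions and rank within reach of the top results.

    The 6-20 band mirrors the range discussed in the text; min_impressions is a
    hypothetical threshold, not a published cutoff.
    """
    picks = [
        r for r in rows
        if r.impressions >= min_impressions and best_pos <= r.position <= worst_pos
    ]
    # Surface the highest-visibility opportunities first.
    return sorted(picks, key=lambda r: r.impressions, reverse=True)
```

Sorting by impressions surfaces the queries where a small lift in selection rate would reach the most searchers; sorting by position instead would favor the pages closest to the top results.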

The useful question is not just "Is CTR manipulation risky?" It is which method creates signals that look authentic enough to help, and which method creates patterns that put the page or listing at risk. That difference drives everything that follows.

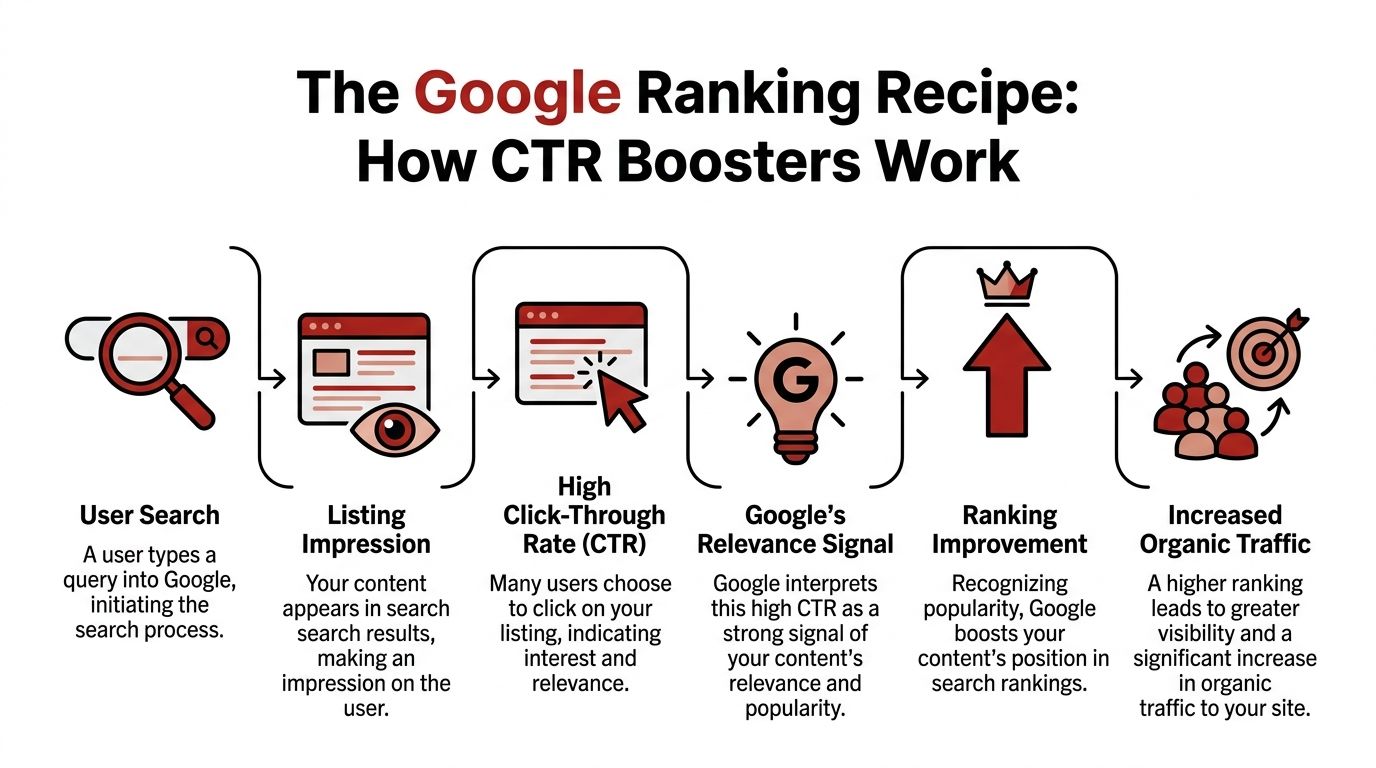

How CTR Boosters Influence Google Rankings

Google does not rank pages by reading text alone. It also observes what searchers do.

Think about two restaurants on the same street. One is full, people stay, and more customers keep walking in. The other is empty. Even if both menus look similar, the busy restaurant sends a stronger real-world signal. Search results work in a similar way. A listing that attracts clicks and keeps people engaged can look more useful than one that gets ignored.

The signal stack Google can observe

A CTR booster is built around the idea that search engines treat behavior as evidence.

The important signals are not just the click itself. They include what happens after the click:

- Selection from the SERP: Your result gets chosen over nearby competitors.

- Dwell behavior: The visitor stays long enough to suggest the page matched intent.

- Page depth: The user visits additional pages instead of leaving immediately.

- Return patterns: The session looks like a normal search experience rather than a robotic hit.

That is why crude traffic inflation rarely works well. A click with no believable follow-through is weak. A click paired with natural browsing is much stronger.

CTR momentum and why it matters

The useful term here is CTR momentum.

According to MapRanking’s explanation of CTR booster mechanics, CTR booster tools operate by simulating geo-targeted, high-intent user actions that establish CTR momentum, a compounding engagement signal that accelerates ranking. The same page states that the architecture uses configurable intensity tiers and that case studies showed notable ranking lifts within 30 to 60 days.

That compounding effect is what practitioners care about. Once a page starts getting chosen more often, it can gain slightly better placement. Better placement can create more impressions and more clicks. That can reinforce the initial lift.

Why local SEO responds so visibly

Local results are especially sensitive to this because the search journey is short and intent is high.

A person searching for a nearby service does not usually browse for an hour. They search, compare a few options, click, call, request directions, or visit a website. If a business appears to win that interaction pattern repeatedly, it can look more prominent.

For local campaigns, the behavioral architecture often includes:

| Signal type | Why it matters |
|---|---|
| Clicks on the listing | Indicates immediate relevance |
| Calls or direction requests | Suggests commercial intent |
| Website visits from branded or service terms | Adds post-click context |
| Geo-targeted search behavior | Makes the signal align with the local market |

This is also why random traffic from the wrong geography tends to be useless. Search behavior has to match the keyword, the location, and the likely intent behind that query.

What works and what does not

What works is consistency. The most credible campaigns build a believable pattern over time.

What does not work is brute force. Sudden bursts, irrelevant locations, and paper-thin sessions create noise instead of trust. For the broader SEO rationale, it is worth reading up on why SERP clicks impact SEO, because that topic connects the click itself to ranking behavior.

Key point: A CTR booster influences rankings by improving the quality and quantity of observed engagement around a search result. The click opens the door. The post-click behavior makes it believable.

Bots vs. Human Clickers: The Two Types of CTR Boosters

Not all CTR booster systems create the same kind of signal.

In practice, there are two broad models. One uses automated bots. The other uses human-powered networks. They may chase the same outcome, but they do it in very different ways, and the trade-offs are not small.

How bot-based CTR boosters work

Bot-driven systems are software-led. They try to mimic real visitors by controlling browsers, devices, locations, and session behavior.

The technical side is more advanced than many buyers expect. CTRBooster describes this software model as often running on Windows-based architecture, usually with real browser and device identification, residential proxy rotation, session controls, and even “pogo stick” search patterns that try to resemble normal browsing. The same source notes that this traffic can register in analytics tools as legitimate sessions.

That stack usually includes:

- Residential proxies: Used to vary origin points and avoid obvious source repetition.

- Browser fingerprint simulation: Makes sessions look less uniform.

- Configurable dwell and navigation: Lets the operator set time on page and internal clicks.

- Campaign concurrency: Multiple domains, URLs, or keywords can run at once.

Bot systems are attractive because they are scalable and highly configurable. They are also the easiest to misuse.

How human-powered networks differ

Human-powered networks take the opposite route. Instead of simulating human behavior with software, they ask real people to perform the search and click behavior.

The biggest advantage is signal authenticity. A real person on a real device with a real browsing environment creates fewer synthetic fingerprints than software trying to imitate one.

The downside is control. Human networks are usually less precise than bots in terms of exact session choreography. They can also be slower to ramp and harder to standardize at scale.

ClickSEO’s automated traffic bot overview discusses this distinction between automated traffic patterns and more authentic organic click behavior.

The decision table that matters

The right comparison is not speed versus price. It is signal authenticity versus synthetic control.

| Factor | Automated Bots | Human-Powered Networks |
|---|---|---|
| Traffic generation method | Software simulates user behavior | Real users perform searches and clicks |
| Environment control | High. Operators can configure dwell, page depth, and patterns | Moderate. Real behavior is less rigid |
| Signal authenticity | Lower than human traffic because the system is manufactured | Higher because real users create the sessions |
| Scale | Easy to scale quickly | Usually slower and more constrained |
| Pattern risk | Higher if setup is sloppy or too aggressive | Lower when geography and intent are well matched |
| Analytics visibility | Usually visible in analytics platforms | Also visible, often with more natural variation |
| Best use case | Testing, short bursts, controlled experiments | Longer-term campaigns where credibility matters more |

What usually fails in real campaigns

A lot of CTR campaigns fail because the operator buys the tool before making the strategic choice.

Common mistakes include:

- Choosing bots for fragile assets: A local listing or money page is not the place for reckless pattern generation.

- Forcing precision where realism matters more: Exact dwell times repeated across sessions look neat in a dashboard and odd in the wild.

- Ignoring geography: A local keyword needs local behavior, not broad untargeted traffic.

- Treating all keywords equally: Branded, local, and commercial queries do not deserve the same campaign logic.

A practical rule for choosing the method

If the page matters to revenue, lean toward the most authentic signal source you can get.

If the campaign is experimental, non-critical, or limited to controlled testing, bot systems may be useful. But the safer long-horizon approach is usually the one that minimizes synthetic fingerprints and mirrors how genuine searchers behave.

Rule of thumb: The more valuable the asset, the less tolerance you should have for artificial-looking behavior.

Evaluating CTR Manipulation Risks

Most discussions about CTR manipulation stop at one sentence: “It’s risky.”

That is not wrong. It is also not useful enough.

The core issue is that risk is not binary. It sits on a spectrum shaped by traffic source, pattern quality, geography, timing, and whether the session looks like something a normal searcher would do.

What we know and what we do not

This is the uncomfortable part. Hard public evidence on detection rates is thin.

Blue Things notes in its discussion of CTR manipulation risk that detection risk and Google penalty likelihood remain largely unquantified, and that no independent audits validate vendor safety claims or measure actual penalty exposure. That gap matters because agencies and consultants still have to advise clients despite the lack of clean external data.

So the honest position is this: the risk is real, but the exact probability is not well measured.

What likely triggers detection

Google does not need to prove intent the way a human reviewer would. It only needs to notice that a pattern does not fit normal search behavior.

The biggest red flags are usually qualitative:

- Behavior that is too uniform: Sessions with identical timing, depth, or click order can look manufactured.

- Location mismatch: Traffic that does not fit the keyword’s market is hard to justify.

- Clicks without believable follow-through: If the search click happens but the engagement pattern feels empty, the signal weakens.

- Over-aggressive ramping: A campaign that jumps too fast can look less like growth and more like injection.
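To audit your own analytics for the first red flag, one simple measure is the coefficient of variation (standard deviation divided by mean) of a session metric such as dwell time: real visitors produce widely scattered values, while scripted sessions tend to cluster. This is a minimal sketch; the `looks_too_uniform` helper and its 0.15 cutoff are hypothetical choices, not a threshold Google is known to use.

```python
import statistics


def coefficient_of_variation(values):
    """Population standard deviation divided by the mean; near 0 means values barely vary."""
    avg = statistics.mean(values)
    if avg == 0:
        return 0.0
    return statistics.pstdev(values) / avg


def looks_too_uniform(values, threshold=0.15):
    """Flag a batch of sessions whose metric (e.g. dwell seconds) is suspiciously similar.

    threshold is a hypothetical cutoff for illustration only.
    """
    if len(values) < 2:
        return False  # too few sessions to judge spread
    return coefficient_of_variation(values) < threshold
```

The same check works on page depth or clicks per session; pass any list of non-negative numeric values.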

Why the method changes the risk

This is where the bots-versus-humans distinction becomes important.

Bot systems can reduce obvious footprints with proxies, device simulation, and browser controls. But they are still software-driven. If the operator pushes volume, repeats patterns, or uses weak infrastructure, the campaign can become easy to spot.

Human-powered systems generally lower risk because they start from real users, real devices, and naturally messy behavior. They are not risk-free. Poor targeting, bad keyword selection, or unnatural campaign pacing can still cause problems. But the core signal is less synthetic.

A better risk framework

Instead of asking “Is CTR boosting safe?” ask four better questions:

- How authentic is the traffic source?

- Does the behavior match the keyword and location?

- Is the pacing gradual enough to look earned?

- Would this session look normal if you removed the campaign label?

If the answer to any of those is no, risk goes up.

Risk mitigation starts before launch: choose the method first, the volume second, and the keyword set last. Most bad campaigns reverse that order.

The safest campaigns are restrained. They target keywords already showing real impressions, use believable geos, and avoid trying to force overnight dominance.

Evidence and ROI: CTR Booster Case Studies

A client usually asks this question after the risk discussion: if we use a CTR booster carefully, what does the return look like?

The honest answer is that ROI varies less by the tool name and more by signal authenticity, keyword intent, and starting position. A human-driven campaign on a page already sitting near page one can produce measurable movement. A bot-driven campaign on a weak page can burn budget and create noise without changing revenue.

What the documented examples show

The useful case studies are the ones tied to commercial queries, not vanity terms. Published case studies point to local campaigns for searches such as pest control, pressure tank repair, and auto body work showing ranking improvement over roughly one to two months, in line with the 30-to-60-day window cited earlier. The exact percentages matter less than the pattern. These were high-intent searches where a better click profile could influence visibility fast enough to affect lead flow.

That distinction matters.

A movement from position 11 to 7 on a buyer-intent keyword can produce more business value than a larger jump on an informational query with weak conversion intent. This is why I treat CTR boosting as a ranking acceleration tactic, not a standalone growth strategy. It works best when the page already matches the search and needs stronger engagement signals to compete.
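To make that comparison concrete, a ranking move can be valued by the extra clicks it earns, multiplied by how often those clicks convert and what a conversion is worth. The sketch below is a hypothetical helper, not a standard formula from any tool, and every input, including the click counts, is something you would supply from your own Search Console and sales data.

```python
from dataclasses import dataclass


@dataclass
class KeywordMove:
    """A hypothetical before/after snapshot for one keyword."""
    keyword: str
    clicks_before: float         # monthly clicks at the old position
    clicks_after: float          # monthly clicks at the new position
    conversion_rate: float       # fraction of clicks that convert, e.g. 0.05
    value_per_conversion: float  # revenue per call, form fill, or sale


def monthly_value_gain(move: KeywordMove) -> float:
    """Extra monthly revenue from a ranking move: extra clicks x conversion rate x value."""
    extra_clicks = move.clicks_after - move.clicks_before
    return extra_clicks * move.conversion_rate * move.value_per_conversion


def rank_by_value(moves):
    """Order moves by business value rather than raw click gain."""
    return sorted(moves, key=monthly_value_gain, reverse=True)


if __name__ == "__main__":
    # Hypothetical inputs, not measured data.
    buyer = KeywordMove("emergency pest control", 20, 50, 0.10, 200)
    info = KeywordMove("what do termites eat", 50, 400, 0.002, 20)
    for move in rank_by_value([info, buyer]):
        print(f"{move.keyword}: {monthly_value_gain(move):.2f}")
```

With those hypothetical inputs, the buyer-intent term gains about 600 a month while the informational term gains about 14, even though its click jump is more than ten times larger.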

How to judge ROI without overstating the result

The right way to evaluate a CTR booster is to connect ranking movement to business outcomes and to separate authentic lift from temporary volatility.

Use a simple filter:

- Keyword value: Does the query lead to calls, form fills, booked appointments, or product sales?

- Starting rank: Terms already ranking within reach usually offer better return than terms buried with little visibility.

- Signal authenticity: Human click campaigns tend to hold value better because the behavior looks like normal search activity. Bot campaigns can still move rankings, but they often carry more volatility and more monitoring overhead.

- Conversion path: A ranking gain has limited value if the landing page does not convert the traffic it earns.

- Durability: Watch whether the lift sticks after the campaign slows. Short spikes are less valuable than stable gains.
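The durability filter can be expressed as a simple comparison of average positions before, during, and after a campaign. This is a sketch under assumptions: the inputs are daily average positions exported from rank tracking or Search Console, and the one-position `tolerance` is a hypothetical allowance you would tune to how volatile the keyword normally is.

```python
from statistics import mean


def lift_held(baseline, during, after, tolerance=1.0):
    """Return True if a ranking gain survived once the campaign slowed.

    Each argument is a non-empty list of daily average positions (lower is better).
    tolerance is a hypothetical allowance for normal post-campaign drift.
    """
    base, peak, post = mean(baseline), mean(during), mean(after)
    improved = peak < base                   # the campaign window actually moved up
    stayed_close = post <= peak + tolerance  # little slippage after the push ended
    still_ahead = post < base                # still better than where it started
    return improved and stayed_close and still_ahead
```

Requiring all three conditions keeps a short spike that fully reverts from counting as a durable gain.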


Where the strongest payback usually appears

The best returns usually come from pages with clear commercial intent and existing visibility.

| Page type | Why it responds |
|---|---|
| Local service pages | Search intent is immediate and easy to reinforce |
| Google Business Profile terms | Click, call, and direction behavior can align tightly |
| Product pages with existing impressions | They need stronger selection signals |
| Affiliate money pages | Small ranking gains can change revenue concentration |

The weaker returns usually come from pages with no traction, weak intent match, or poor offers. That is where teams misread CTR manipulation. They assume the tool failed, when the fundamental issue is that the underlying asset was never competitive enough to convert extra attention into lasting rankings or revenue.

Case studies are useful, but only if they are read with the method in mind. If the clicks come from real users, the upside is usually more believable and the risk is easier to manage. If the clicks come from software, ROI calculations need to include the extra operational risk, the chance of unstable gains, and the time required to keep patterns from looking synthetic.

Implementing Your First CTR Booster Campaign

A first CTR booster campaign should feel conservative, not clever.

Most ranking problems here come from impatience. Teams target too many keywords, send too much traffic, and create a pattern that looks manufactured before they learn how the page responds.

Start with pages that already deserve to win

Do not begin with your hardest keyword.

Start with pages or listings that already show signs of life in Search Console or rank tracking. The best candidates usually have impressions, some click history, and a clear match between keyword intent and landing page content. For local SEO, that often means service queries already associated with the business. For e-commerce, it usually means product or collection terms that are visible but underclicked.

A first campaign works best when:

- The keyword is already ranking within reach: You are amplifying demand around an existing foothold.

- The landing page matches intent cleanly: Do not send transactional searches to a thin blog post.

- The title and snippet are worth clicking: If the SERP presentation is weak, boosting clicks becomes harder and less believable.

Configure behavior like a normal user would

Once the target is set, the campaign needs believable behavior.

That means choosing geo-targeting that fits the keyword, setting session duration that reflects real interest, and allowing enough page depth to avoid single-hit sessions. If the tool lets you control internal link clicks, use that feature sparingly and logically. A user who lands on a service page may visit contact, pricing, or location content. That is plausible. Five random clicks across unrelated pages is not.

When human-powered networks are available, they usually fit this stage better than software-driven traffic because the behavior comes with natural variation. One option in that category is ClickSEO, which lets users set daily organic clicks, geo targets, session length, and page depth around keyword-driven campaigns.

Pace matters more than volume

Volume is where beginners make the worst decisions.

A campaign should ramp in a way that mirrors growing interest, not an injection of synthetic attention. Low and slow is not a slogan. It is a pattern control strategy. It gives you time to see whether rankings move, whether CTR improves in Search Console, and whether the engagement profile looks normal.

Use a monitoring stack that includes:

- Google Search Console: Watch impressions, clicks, average position, and query mix.

- Rank tracking: Follow day-to-day movement, especially on local terms.

- Analytics: Check whether session behavior still looks believable once the campaign runs.
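For the Search Console part of that stack, the sketch below rolls daily rows up into a period CTR and an impression-weighted average position, then compares a baseline window with the current one. Weighting daily averages by impressions is an approximation of a period-level average position, and the `DailyRow` structure is an assumption rather than Search Console's own export format.

```python
from dataclasses import dataclass


@dataclass
class DailyRow:
    """One day of Search Console data for a single query/page pair (hypothetical structure)."""
    clicks: int
    impressions: int
    position: float  # that day's average position; lower is better


def summarize(rows):
    """Roll daily rows into a period CTR and an impression-weighted average position."""
    clicks = sum(r.clicks for r in rows)
    impressions = sum(r.impressions for r in rows)
    if impressions == 0:
        raise ValueError("period has no impressions to summarize")
    # Days with more impressions count more toward the period's average position.
    position = sum(r.position * r.impressions for r in rows) / impressions
    return {"ctr": clicks / impressions, "position": position}


def compare_periods(baseline, current):
    """CTR change in percentage points and position change (negative means the page moved up)."""
    before, after = summarize(baseline), summarize(current)
    return {
        "ctr_change_pts": (after["ctr"] - before["ctr"]) * 100,
        "position_change": after["position"] - before["position"],
    }
```

Reading CTR and position together matters: a CTR rise with no position change may simply reflect a better snippet, while a position gain with flat CTR suggests the page is being shown more without being chosen more.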

Campaign discipline beats aggressive settings: if a page cannot improve with a restrained push, a larger push often makes the pattern worse, not better.

What to adjust during the run

Most campaigns need tuning after launch.

If rankings do not move, the issue may not be traffic volume. It may be weak relevance, poor SERP copy, or a keyword that is too competitive for the current page. If behavior looks off in analytics, simplify the session pattern. If local results are inconsistent, tighten the geo targeting.

A practical adjustment sequence looks like this:

- Review keyword-page fit.

- Check whether the query has enough existing impressions.

- Refine geo settings.

- Adjust dwell and page depth only if the current behavior looks unnatural.

- Increase volume last.

This order keeps you from using traffic as a substitute for strategy.

Audience-Specific Strategies and Your Next Move

A CTR booster is not one tactic. It is a lever. Different operators should pull it in different ways.

For SEO agencies and consultants

Client risk comes first.

Agencies should treat CTR boosting as a controlled intervention, not a default monthly line item. Use it on assets that already have traction, document the campaign logic, and set expectations around uncertainty. The main job is not only getting lift. It is protecting the account from reckless execution.

For e-commerce brands

Product and collection pages are the usual targets.

Focus on keywords with commercial intent and existing impressions. If the product page has poor images, weak pricing clarity, or thin copy, fix that before boosting. Better post-click behavior makes the campaign more believable and more profitable.

For local businesses

Local campaigns respond best when the geography is tight and the query is service-driven.

Keep the behavior aligned with how real customers search in your city or service area. The closer the search pattern matches actual local intent, the more credible the signal becomes.

For affiliate marketers and niche publishers

Do not spread clicks across the whole site.

Choose money pages that already rank and have clear intent. If the page does not hold attention on its own, boosted clicks will not save it. Strong comparison formatting, clearer intros, and better offer placement usually need to come first.

How to evaluate any CTR booster vendor

Before buying, ask questions that expose signal quality:

- What generates the clicks, software or real users?

- How is geography handled?

- Can behavior vary naturally across sessions?

- How do they recommend ramping volume?

- What do they say about risk when they cannot hide behind buzzwords?

The strongest answer is rarely the most aggressive one. It is the one that sounds most like normal search behavior.

A CTR booster can move rankings. The safer path comes from choosing authentic signals, limiting synthetic patterns, and applying the tactic only where the page already has a real chance to win.

If you want a practical way to test CTR improvement with real search behavior, ClickSEO offers a human-driven CTR optimization service built around keyword searches, organic clicks, dwell time, and page-depth controls. It fits best for teams that want to evaluate engagement-based ranking lifts while keeping the traffic pattern closer to real user behavior than a pure bot setup.