Fake Website Hits: Your Guide to Detection & Prevention

Your traffic chart looks healthy. Sessions are climbing. Pageviews are up. Maybe a campaign report even says performance is improving.

But the phone isn't ringing. Demo requests aren't moving. Sales stay flat.

That gap is usually where the real story starts.

I've seen this pattern on local business sites, e-commerce stores, affiliate properties, and SaaS landing pages. The dashboard says growth. The business says stall. When that happens, fake website hits move from abstract annoyance to active problem. They don't just clutter reports. They distort decisions.

A lot of site owners get stuck because the traffic isn't obviously fake. It doesn't always look like a flood of junk from one bad source. Sometimes it comes in neat little spikes. Sometimes it spreads evenly across pages no human would browse that way. Sometimes it imitates user behavior well enough to slip into analytics and poison the data you rely on.

The hard part isn't only spotting bots. It's learning to separate benign automation, malicious bot traffic, and manufactured human traffic from visitors who can buy, call, subscribe, or become customers. That's the difference between cleaning up noise and building an SEO strategy that can hold up over time.

The Unsettling Silence of Rising Traffic

Monday starts with a win. GA4 is up. Sessions climbed over the weekend. A few landing pages look busier than usual.

By the end of the week, the sales team has nothing new to show for it.

That pattern should make any site owner suspicious. Real demand rarely stays trapped inside analytics. It shows up somewhere else: more form fills, more calls, more cart activity, more branded searches, more conversations with support, or at least better engagement from the right pages. If traffic rises and every commercial signal stays flat, the visit count is often measuring noise instead of opportunity.

I see this most often when teams treat all traffic as equal. It is not. Some visits come from benign bots that support the web and should usually be left alone. Some come from bad bots that scrape, spam, probe, or inflate reports. Some come from manufactured human traffic: paid clicks or low-quality visit schemes designed to make a dashboard look active without creating real interest. If you want a quick reference point, this breakdown of traffic bot patterns and fake visit behavior is a useful place to compare what you are seeing.

The business risk is not limited to messy reports.

False traffic changes decisions. It can make a weak campaign look acceptable, hide channel problems, and send teams chasing the wrong fix. I have watched companies rewrite landing pages, swap offers, and question sales follow-up when the underlying issue was simpler: the visitors were never qualified, and in many cases were never genuine prospects at all.

Traffic without downstream signals creates false reassurance, which is as dangerous as the technical mess in your analytics. People keep funding channels that look busy. SEO priorities drift toward pages attracting worthless sessions. Conversion rates appear to collapse for no clear reason. Then the team starts solving a conversion problem that was really a traffic quality problem.

That is why rising traffic can be the wrong kind of good news. The number goes up. Trust in the data goes down.

What Are Fake Website Hits, Anyway?

Fake website hits are visits that make your analytics look active without representing real customer interest. They usually fall into three groups: benign bots, bad bots, and manufactured human traffic. If you do not separate those groups, you end up treating indexing, fraud, and fake engagement as if they were the same problem.

The three buckets that matter

This classification is the one I use in audits because it keeps teams from making bad filtering decisions.

| Traffic type | What it is | Should you block it? | Why it matters |
|---|---|---|---|
| Benign bots | Crawlers and automated systems performing legitimate tasks | Usually no | They support indexing, uptime checks, feed fetching, and other normal web functions |
| Bad bots | Automation built to scrape, spam, test logins, inflate metrics, or abuse systems | Usually yes | They waste budget, pollute reports, and increase security exposure |
| Manufactured human traffic | Real people or semi-assisted traffic sent to simulate engagement without true interest | Treat cautiously | It can look cleaner than bot traffic while still corrupting channel and SEO decisions |

Not all automation is a threat. Search crawlers, monitoring tools, and other approved systems are part of the web's normal operation. Imperva's bad bot reporting has shown for years that automated traffic makes up a large share of internet activity, which is why this distinction matters. A site with bot traffic is not automatically under attack.

What matters is intent.

What bad bot traffic does

Bad bots do not visit for the reasons a customer visits. They are built to collect data, manipulate signals, abuse forms, test weaknesses, or create the appearance of demand. In analytics, they often show up as short sessions, strange geographies, impossible engagement patterns, or sudden spikes tied to no genuine campaign activity.

Common examples include:

- Metric inflation that makes pageviews, sessions, or click activity look stronger than they are

- Content scraping from product pages, blog posts, category pages, or pricing pages

- Form and login probing used for spam, credential testing, or low-grade attacks

- Server hammering that wastes resources and can affect site performance

- Referral and campaign spoofing that causes teams to credit the wrong source

The lower end of this market is easy to find. Services selling traffic bots and fake visits make it clear how cheap it is to manufacture activity that looks real in a dashboard and means nothing in the pipeline.

Manufactured human traffic is a different problem

This category confuses people because the visitor may be a real person.

That does not make the traffic genuine.

Manufactured human traffic comes from paid visit schemes, click farms, incentivized traffic networks, or low-quality campaigns designed to copy engagement patterns without real demand behind them. The sessions may last longer than bot visits. They may scroll, click, and even trigger events. But they still send the same false signal to the business. Someone wanted the numbers to look healthy, not to solve a problem, compare options, or buy.

That distinction matters for SEO. Search performance improves when pages satisfy real intent, earn links, and create engagement that reflects actual usefulness. Fake visits, whether automated or human-assisted, do not create that foundation. They only make diagnosis harder.

A simple rule helps here. Do not ask only whether the traffic is human. Ask whether the visit reflects honest interest.

Risks of Ignoring Fake Traffic

A lot of teams treat fake website hits like a reporting nuisance. They shouldn't.

Bad traffic changes budgets, SEO decisions, and security posture. Once it gets into your reporting stack, it starts steering the business.

Financial damage arrives first

The fastest hit is wasted spend.

If paid campaigns send visitors into a site already polluted by invalid traffic, attribution gets messy fast. You can end up increasing spend on channels that appear to drive volume but don't produce customers. Hosting and infrastructure costs can also rise when junk traffic chews through resources, especially on stores or content-heavy sites.

The same pattern shows up elsewhere on the web. In 2023, Google blocked 170 million policy-violating reviews and removed 12 million fake business profiles, which shows how large the ecosystem of fabricated online activity has become (shapo.io on fake review statistics). The same incentive structure drives fake website hits. Inflated numbers create false credibility, false demand, and bad decisions.

If you're tempted to gloss over the issue because the traffic “looks good,” it's worth seeing how the market around buying fake traffic works. The promise is volume. The cost is corrupted decision-making.

SEO problems are less dramatic, but more expensive

Search performance rarely collapses in one obvious moment. It degrades through bad inputs.

When fake website hits distort engagement metrics, you lose the ability to judge content accurately. A weak page may look healthy because automation props up visits. A strong page may look weak because junk traffic inflates its bounce rate or drags down its average time on site. Teams then rewrite pages that didn't need rewriting and ignore pages that did.

That creates three practical SEO problems:

- Misread landing pages because engagement signals are contaminated

- Bad internal prioritization because the loudest pages aren't the most valuable ones

- Faulty testing outcomes because the traffic sample isn't trustworthy

Security risk hides behind “just analytics”

Malicious bots don't stop at inflating reports.

They scrape pricing, test forms, probe account areas, and generate noise that masks more serious abuse. Even if your main concern is SEO, repeated fake website hits can point to broader exposure. Marketing teams often notice the symptoms before security teams do because analytics goes weird before systems fail.

If a traffic spike has no matching business explanation, assume it's operationally relevant until proven otherwise.

The cost of delay

The worst trade-off is time.

Most businesses don't fix fake traffic when it starts. They fix it after months of reporting confusion, wasted optimization work, and arguments over why “traffic growth” didn't create business growth. By then, they've already made strategic decisions on bad data.

That delay is why fake website hits deserve executive attention, not just a quick filter in analytics.

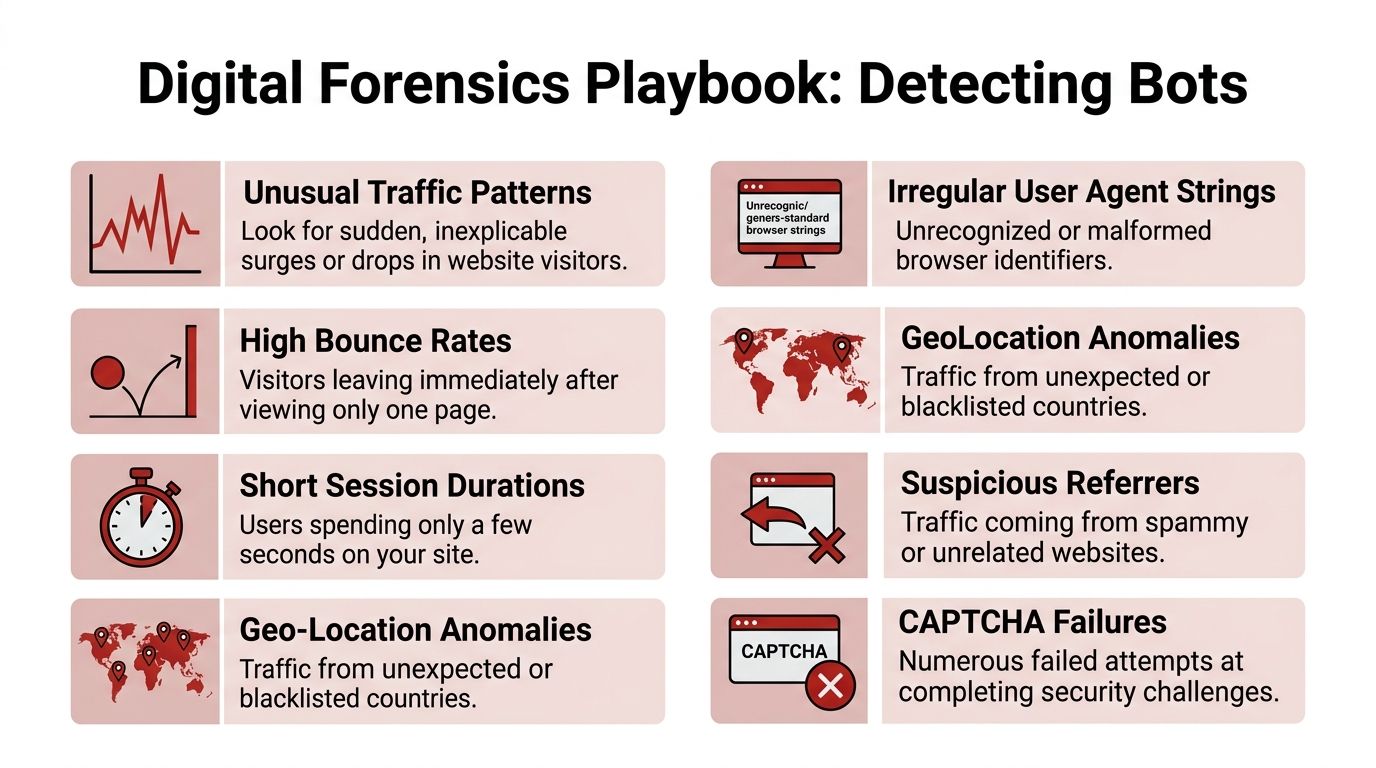

Your Digital Forensics Playbook for Detecting Bots

A reporting dashboard can show rising sessions, healthy engagement, and busy landing pages while sales stay flat and search performance stalls. That is usually the moment teams realize they are not dealing with one traffic problem. They are dealing with three: bad bots that should be blocked, benign bots that should be understood and filtered out of analysis, and manufactured human traffic that can look convincing enough to corrupt SEO decisions.

Detection gets easier once you stop asking, “Is this traffic fake?” and start asking, “What kind of fake, and what damage can it do?”

Start with traffic shape, not total volume

Volume is a vanity check. Traffic shape is the diagnostic check.

Real users do not distribute themselves neatly. They cluster around intent. A blog post earns discovery traffic. A service page gets fewer visits but stronger commercial signals. Brand searches hit the homepage or core category pages. Messy patterns are normal.

Suspicious traffic often looks unnaturally tidy. Deep pages attract visits while obvious entry pages stay quiet. A narrow set of URLs receives almost identical attention. Sessions arrive at regular intervals. The site appears active, but the pattern lacks human curiosity.
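One way to put a number on "sessions arrive at regular intervals" is to measure how much the gaps between session start times vary. The sketch below is a minimal illustration, not a production detector. The function names and the 0.1 threshold are my own assumptions, and it expects session start times as plain seconds exported from your analytics or logs.

```python
from statistics import mean, pstdev


def interarrival_cv(timestamps):
    """Coefficient of variation of the gaps between consecutive session starts.

    `timestamps` are session start times in seconds. Returns None when there
    are too few sessions to say anything useful.
    """
    ordered = sorted(timestamps)
    gaps = [later - earlier for earlier, later in zip(ordered, ordered[1:])]
    if len(gaps) < 2:
        return None
    average_gap = mean(gaps)
    if average_gap == 0:
        return 0.0  # every session started at the same instant
    return pstdev(gaps) / average_gap


def looks_scheduled(timestamps, cv_threshold=0.1):
    """Flag arrival patterns that are far more regular than human browsing.

    The threshold is a hypothetical starting point; tune it on your own data.
    """
    cv = interarrival_cv(timestamps)
    return cv is not None and cv < cv_threshold
```

A low value means the gaps are nearly identical, which is what a scheduler produces. Human arrivals tend to be bursty, so their variation is usually much higher. Treat a flag here as one clue to investigate, not as proof.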

What to check in GA4

Review combinations of signals, not one metric in isolation:

- Sudden spikes with no business trigger. No campaign, no press mention, no ranking movement, yet sessions jump.

- Traffic concentrated on strange page groups. Low-visibility URLs get attention while navigational pages do not.

- Engagement without business movement. Events fire, session counts rise, and conversions stay unchanged.

- Geo, language, or device mixes that do not fit the market. Local businesses rarely attract unexplained bursts from irrelevant regions.

- Arrival timing that looks scheduled. Repeated sessions land in predictable patterns instead of normal daily variation.

One clue proves very little. Three clues pointing in the same direction usually justify a deeper review.
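The "three clues" rule can be written down so the review is applied the same way every week. This is a minimal sketch under my own assumptions: the clue names, the spike ratio of 2.0, and the idea of feeding in daily session counts exported from GA4 are all illustrative, not part of any GA4 API.

```python
from statistics import median


def is_spike(daily_sessions, ratio=2.0):
    """True if the most recent day exceeds `ratio` times the median of earlier days."""
    if len(daily_sessions) < 2:
        return False
    history, today = daily_sessions[:-1], daily_sessions[-1]
    baseline = median(history)
    return baseline > 0 and today > ratio * baseline


def review_needed(signals, min_clues=3):
    """Combine independent clues and report which ones fired.

    `signals` maps a clue name to True/False. A deeper review is suggested
    only when at least `min_clues` point in the same direction.
    """
    fired = [name for name, hit in signals.items() if hit]
    return len(fired) >= min_clues, fired


# Example with hypothetical clue names matching the checklist above.
signals = {
    "spike_without_trigger": is_spike([100, 110, 95, 105, 400]),
    "odd_page_groups": True,
    "engagement_without_conversions": True,
    "geo_mismatch": False,
    "scheduled_arrivals": False,
}
needs_review, fired_clues = review_needed(signals)
```

The point of the structure is that no single check decides anything. Each clue is cheap and imperfect, and the combination is what justifies pulling logs.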

Read paths like a detective, not a dashboard user

Session paths expose motive.

A real visitor usually leaves a trail that makes sense. They land on an article from search, open a related page, check pricing, return to the original tab, and decide whether to contact you. Even weak sessions have logic.

Manufactured traffic and bot traffic often break that logic. They jump into deep URLs with no plausible entry point, click across unrelated sections, or trigger engaged-session signals without any sign of evaluation. Superficially engaged traffic is more dangerous than obvious junk because it can mislead teams during reporting meetings.

The quickest behavior check looks like this:

| Signal | Real user tendency | Suspicious tendency |
|---|---|---|
| Entry path | Starts on a logical landing page | Appears deep in site structure with no discovery path |
| Navigation flow | Moves through related pages | Jumps across unrelated sections |
| Timing | Pauses, scrolls, reads | Moves too fast to consume content |
| Commercial intent | Shows selective interest | Touches many pages with no decision pattern |

Use session coherence as your advanced filter

Bounce rate used to catch a lot of junk traffic. It does not catch enough now.

Advanced bots can scroll, click, fire events, and mimic interaction. Paid click farms and manufactured human traffic can look even cleaner at first glance. The better filter is session coherence. Does the sequence of actions match a believable goal?

That is why I review traffic in layers. Bad bots usually fail the technical smell test. Benign bots often reveal themselves in user agents, crawl behavior, and repetitive patterns. Manufactured human traffic is harder. It may pass surface-level engagement checks, but it still tends to fail the intent test. The user appears active without showing any consistent reason for being there.
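Session coherence is easier to discuss with something concrete in front of you. The sketch below checks three of the behaviors described above: a deep entry with no discovery path, pages viewed too fast to read, and hopping across unrelated sections. Everything in it is an assumption for illustration, including the `PageView` structure, the thresholds, and the use of the first path segment as a stand-in for "site section".

```python
from dataclasses import dataclass


@dataclass
class PageView:
    t: float    # seconds since the session started
    path: str   # URL path, e.g. "/blog/some-post"


def _segments(path):
    return [part for part in path.split("/") if part]


def coherence_flags(views, min_dwell=3.0, max_entry_depth=2,
                    known_entries=frozenset({"/"})):
    """Return a list of reasons a session looks incoherent (empty if none)."""
    flags = []
    if not views:
        return flags
    ordered = sorted(views, key=lambda v: v.t)

    # 1. Entry deep in the site structure with no plausible landing page.
    entry = ordered[0].path
    if entry not in known_entries and len(_segments(entry)) > max_entry_depth:
        flags.append("deep_entry")

    # 2. More than half of the page transitions happen faster than a person reads.
    dwell = [b.t - a.t for a, b in zip(ordered, ordered[1:])]
    if dwell and sum(d < min_dwell for d in dwell) > len(dwell) / 2:
        flags.append("too_fast")

    # 3. Every step lands in a different top-level section.
    sections = [(_segments(v.path) or [""])[0] for v in ordered]
    switches = sum(a != b for a, b in zip(sections, sections[1:]))
    if len(ordered) >= 4 and switches == len(ordered) - 1:
        flags.append("section_hopping")

    return flags
```

A real visitor who reads an article, opens a related post, and then checks pricing produces no flags. A session that enters four levels deep and touches four unrelated sections in three seconds produces all three. Your own landing pages and section layout will need different settings.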

The behavioral examples in this YouTube breakdown of session incoherence and bot fingerprinting are useful if your sessions look human on the surface but still do not support rankings, leads, or revenue.

Field note: The fake traffic that causes the most SEO damage is rarely the obvious bot swarm. It is the traffic that looks engaged enough to survive a quick review and distort the next round of decisions.

Server logs show what analytics smooths over

Analytics platforms summarize behavior. Logs show the raw requests.

That difference matters. GA4 can make suspicious traffic look cleaner than it is because the reporting layer groups, models, and abstracts what happened. Server logs let you inspect request frequency, user agents, asset requests, status codes, and timing at a much lower level.

Focus on patterns like these:

- Rapid-fire requests that hit pages faster than a person could read or interact

- Repeated sequences across many sessions that suggest automation rather than browsing

- Malformed or unusual user agents that do not fit normal browser behavior

- Requests for endpoints or assets in combinations a real visitor would rarely trigger

This work is not glamorous, but it eliminates ambiguity.
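As a starting point for the rapid-fire check, the sketch below parses access log lines and flags IPs that send more requests than a person plausibly could inside a short window. It assumes your server writes the common "combined" log format used by Apache and Nginx defaults; if your format differs, the regular expression will need adjusting. The limits of 10 hits in 10 seconds are hypothetical.

```python
import re
from collections import defaultdict
from datetime import datetime

# Assumes the "combined" access log format; adjust if your server logs differently.
LOG_RE = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<ts>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "(?P<referer>[^"]*)" "(?P<ua>[^"]*)"'
)


def parse_line(line):
    """Parse one log line into a dict, or return None if it does not match."""
    match = LOG_RE.match(line)
    if not match:
        return None
    record = match.groupdict()
    record["ts"] = datetime.strptime(record["ts"], "%d/%b/%Y:%H:%M:%S %z")
    return record


def rapid_fire_ips(records, max_hits=10, window_seconds=10):
    """Return IPs with more than `max_hits` requests inside any sliding window."""
    stamps_by_ip = defaultdict(list)
    for record in records:
        stamps_by_ip[record["ip"]].append(record["ts"])

    flagged = set()
    for ip, stamps in stamps_by_ip.items():
        stamps.sort()
        start = 0
        for end, ts in enumerate(stamps):
            # Shrink the window until it spans at most `window_seconds`.
            while (ts - stamps[start]).total_seconds() > window_seconds:
                start += 1
            if end - start + 1 > max_hits:
                flagged.add(ip)
                break
    return flagged
```

Keep in mind that a single page load also requests images, scripts, and stylesheets, so you may want to filter records down to HTML page requests before counting. Shared office or mobile carrier IPs can also trip a simple per-IP limit.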

Check IP reputation and source quality

Source analysis matters because suspicious traffic rarely comes from one static IP block anymore. Operators rotate proxies, use residential networks, route through cloud providers, and mix sources to avoid easy detection. That means IP review works best when paired with behavior review. A suspicious session from a known data center range is useful. A cluster of similar sessions from mixed infrastructure, all showing the same incoherent path pattern, is much more useful.
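Both halves of that idea can be sketched briefly: checking whether an IP falls in a known data center range, and finding path patterns that repeat across many different IPs. The ranges below are placeholders taken from address blocks reserved for documentation, not real provider ranges. In practice you would load ranges from a source you trust. The function names and the `min_ips` threshold are assumptions.

```python
import ipaddress
from collections import defaultdict

# Placeholder ranges (reserved documentation blocks), standing in for real
# data center CIDRs that you would load from your own trusted source.
DATACENTER_RANGES = [
    ipaddress.ip_network(cidr)
    for cidr in ("198.51.100.0/24", "203.0.113.0/24", "2001:db8::/32")
]


def is_datacenter(ip):
    """True if the address falls inside any configured range."""
    address = ipaddress.ip_address(ip)
    return any(address in network for network in DATACENTER_RANGES)


def shared_path_clusters(sessions, min_ips=3):
    """Find identical page sequences that show up from many different IPs.

    `sessions` is an iterable of (ip, list_of_paths). Returns a dict mapping
    each repeated path sequence to the set of IPs that produced it.
    """
    ips_by_sequence = defaultdict(set)
    for ip, paths in sessions:
        ips_by_sequence[tuple(paths)].add(ip)
    return {
        sequence: ips
        for sequence, ips in ips_by_sequence.items()
        if len(ips) >= min_ips
    }
```

The clustering step is the more useful of the two when operators rotate proxies, because it keys on behavior rather than on the address. Popular, legitimate journeys (homepage to pricing, for example) will also cluster, so review the sequences before acting on them.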

For teams that need outside context on suspicious infrastructure, domains, and known abuse patterns, it helps to use threat intelligence platforms instead of treating every anomaly as a one-off analytics glitch.

Build a weekly review routine

Detection works best as an operating habit, not a one-time cleanup. Use a short weekly review that separates traffic into three buckets. Bad bots to block. Benign bots to exclude from reporting and monitor. Manufactured human traffic to isolate and challenge harder, because it can poison SEO analysis while looking superficially legitimate.

A practical review sequence:

- Sample top landing pages and compare traffic changes with actual business outcomes.

- Inspect session paths for coherence, not just event counts.

- Review geographies, referrers, and devices against your real market.

- Pull raw logs when analytics patterns look too uniform or too sudden.

- Tag repeat patterns by traffic type so filtering and mitigation stay precise.

Perfect certainty is not the goal. Clean enough data to protect strategy is the goal.
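The first step in that sequence, comparing traffic changes with business outcomes, is simple enough to script. This sketch assumes you can export sessions and conversions per landing page for two periods (for example, from GA4 and your CRM). The data shape, the function name, and the 50% growth threshold are my own assumptions.

```python
def divergent_pages(previous, current, min_growth=0.5):
    """Flag landing pages whose sessions grew sharply with no conversion gain.

    `previous` and `current` map a landing page path to a tuple of
    (sessions, conversions) for each period. Returns (path, growth) pairs,
    largest growth first, where growth is a fraction (1.0 means doubled).
    """
    flagged = []
    for path, (sessions, conversions) in current.items():
        old_sessions, old_conversions = previous.get(path, (0, 0))
        if old_sessions == 0:
            continue  # no baseline; new pages need a manual look
        growth = (sessions - old_sessions) / old_sessions
        if growth >= min_growth and conversions <= old_conversions:
            flagged.append((path, round(growth, 2)))
    return sorted(flagged, key=lambda item: -item[1])


# Example with made-up numbers.
last_week = {"/pricing": (200, 10), "/blog/a": (100, 2)}
this_week = {"/pricing": (220, 12), "/blog/a": (400, 2)}
suspects = divergent_pages(last_week, this_week)
```

A flagged page is not automatically fake traffic. Informational content can grow without converting. The list is a shortlist for the path and log checks that follow.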


How to Mitigate and Prevent Fake Website Hits

Detection without action just gives you better bad news.

Once you've identified fake website hits, the goal is to keep them out of reporting where possible, challenge them before they touch key pages, and reduce the damage from anything that still gets through. The right setup isn't one tool. It's a stack.

First fix the data you rely on

Start with measurement hygiene.

If known junk sources keep entering GA4, your reports stay contaminated even after you understand the problem. Exclude obvious internal noise, referral spam, and known bad patterns where your setup allows it. Segment suspicious traffic instead of deleting everything blindly. You want cleaner reporting, not a false sense of cleanliness.

A practical sequence looks like this:

- Create comparison views or segments for suspicious geos, devices, referrers, and landing pages

- Isolate anomalies before broad filtering so you can confirm the pattern

- Document exclusions because undocumented filters create future confusion

- Recheck conversion paths after cleanup to see which pages influence revenue

Put barriers in front of forms and money pages

Not every page needs the same level of defense.

Contact forms, login areas, checkout steps, and lead magnets deserve stricter controls than a basic blog post. If abuse concentrates around form submissions or fake signups, add friction where intent matters most. CAPTCHA is still useful in the right places, especially when paired with honeypot fields and behavior checks.
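Honeypot fields and a basic timing check add almost no friction for real visitors, which is why they pair well with CAPTCHA. The sketch below shows the server-side half of that idea. The field name `website_url`, the two-second minimum, and the function itself are hypothetical; the form also needs a matching hidden input that humans never see or fill in.

```python
def screen_submission(form, rendered_at, submitted_at,
                      honeypot_field="website_url", min_seconds=2.0):
    """Return a list of reasons a form submission looks automated.

    `form` is a dict of submitted fields. `rendered_at` and `submitted_at`
    are timestamps in seconds (when the form was served and when it was posted).
    """
    reasons = []

    # Real users never see the honeypot input, so it should arrive empty.
    if (form.get(honeypot_field) or "").strip():
        reasons.append("honeypot_filled")

    # Filling in a form takes a person at least a couple of seconds.
    if submitted_at - rendered_at < min_seconds:
        reasons.append("submitted_too_fast")

    return reasons
```

An empty list means the submission passed both checks, not that it is certainly human. Submissions that fail are good candidates for a CAPTCHA challenge or a quarantine queue rather than a silent drop, at least until you trust the thresholds.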

The mistake is overusing friction everywhere. That hurts real users.

A better model is selective pressure:

| Area | Protection level | Reason |
|---|---|---|
| Blog and informational pages | Light screening | Preserve crawlability and user experience |
| Contact and signup forms | Moderate challenge | Reduce spam and fake submissions |
| Login and account flows | Strong challenge | Protect against repeated abuse attempts |
| Checkout or payment steps | Tight monitoring and challenge | Limit fraud and operational damage |

Use edge protection, not just page-level patches

If fake website hits are frequent, push mitigation outward.

CDNs and web application firewalls can challenge traffic before it reaches your origin. Tools like Cloudflare, Imperva, and Akamai are commonly used for this because they can combine rate limiting, bot scoring, and reputation data at the edge. That doesn't eliminate all bad traffic, but it reduces the load on your application and keeps more junk out of your analytics and forms.
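To make "rate limiting" less abstract, here is a minimal sliding-window limiter. It is a teaching sketch of the general technique, not how Cloudflare, Imperva, or Akamai implement it, and an in-memory dictionary like this only works for a single process. The class name and default limits are assumptions.

```python
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most `max_requests` per client inside a rolling time window."""

    def __init__(self, max_requests=20, window_seconds=10.0):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._hits = defaultdict(deque)  # client id -> recent request times

    def allow(self, client_id, now):
        """Record a request at time `now` (seconds) and say whether to allow it."""
        recent = self._hits[client_id]
        # Forget requests that have aged out of the window.
        while recent and now - recent[0] >= self.window_seconds:
            recent.popleft()
        if len(recent) >= self.max_requests:
            return False  # over the limit: challenge or reject
        recent.append(now)
        return True
```

Passing the current time in as an argument keeps the class easy to test. In a live service you would call it with `time.monotonic()` and key it on something sturdier than a raw IP where possible. The advantage of doing this at the edge is that rejected requests never reach your origin or your analytics tag.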

Many small businesses underinvest in this area. They patch individual symptoms instead of controlling entry conditions.

Detect incoherent sessions, not just obvious bots

Modern bots can imitate scrolling and clicking. Blocking only by user agent or simple rule sets won't catch enough of them. The stronger approach is to combine technical and behavioral signals. The session-incoherence checks from the video referenced earlier are useful here because advanced AI bots often fail on coherence, such as moving faster than a human can read or jumping to deep URLs with no sensible entry sequence. That kind of inconsistency is often more reliable than surface-level engagement.

The question isn't whether a session looked active. The question is whether the activity made sense.

Session replay tools, event timing, path analysis, and fingerprinting all help here. You don't need to spy on every user. You need to identify patterns that no real prospect would produce repeatedly.

Don't block your way into blindness

Overblocking is a real risk.

I've seen teams tighten rules so aggressively that they suppress useful crawlers, international buyers, VPN users, or legitimate repeat visitors. That's why mitigation should follow a sequence of observe, segment, challenge, then block. Blocking should be the last step when confidence is high.
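The observe, segment, challenge, block sequence can be expressed as a small policy function so that escalation depends on confidence rather than on how alarmed someone feels that day. The thresholds below are hypothetical, and the confidence score is assumed to come from whatever combination of signals you already trust.

```python
from enum import Enum


class Action(Enum):
    OBSERVE = "observe"
    SEGMENT = "segment"
    CHALLENGE = "challenge"
    BLOCK = "block"


def choose_action(confidence, is_verified_crawler=False):
    """Map a 0-1 confidence that traffic is bad to the mildest fitting response.

    Verified benign crawlers are never escalated, which guards against
    suppressing the bots you want on the site.
    """
    if is_verified_crawler:
        return Action.OBSERVE
    if confidence >= 0.9:
        return Action.BLOCK
    if confidence >= 0.6:
        return Action.CHALLENGE
    if confidence >= 0.3:
        return Action.SEGMENT
    return Action.OBSERVE
```

The design choice worth copying is the ordering: blocking is reachable only at the top of the scale, and everything below it leaves real visitors a way through.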

Keep these trade-offs in view:

- Strict controls reduce fraud, but they can also create friction for real visitors.

- Loose controls preserve usability, but they let more junk into reporting.

- One-time cleanup helps, but bot traffic changes and needs ongoing review.

Build a maintenance rhythm

The best setup is boring. It runs every week.

Review anomalies. Update blocklists and challenge rules. Check whether new landing pages are attracting suspicious patterns. Reconcile analytics with CRM, sales activity, and form quality. If the numbers in marketing don't match what the business is feeling, assume the cleanup job isn't finished.

Fake website hits are persistent because the incentives behind them are persistent. Prevention works best when it becomes operational discipline, not emergency cleanup.

The Safe Alternative: Genuine User Engagement

A traffic graph can rise while the business stays flat.

That usually means one of three things is happening. Bad bots are inflating sessions. Benign bots are being counted in places they should have been filtered out. Manufactured human traffic is adding motion without adding intent. If you treat those three sources as one problem, you make bad decisions with more confidence.

Traffic fraud isn't limited to bots hitting your site directly. Clone sites, fake referrals, paid click farms, and low-intent visit schemes can all create activity that looks acceptable in a dashboard and still poisons your read on SEO performance.

Quality beats quantity in SEO signals

Search engines do not reward raw volume in isolation. They reward patterns that line up with real search behavior and useful pages.

That distinction matters. A real visit usually has context. The query makes sense. The landing page matches the promise. The next action fits the page's job, whether that means reading, comparing, clicking deeper, or leaving after getting a quick answer. Those sessions teach you something.

Use this framework:

- Bad bots distort analytics, waste resources, and trigger false wins

- Benign bots support crawling, monitoring, and site infrastructure, but still need to be classified correctly

- Manufactured human traffic can look cleaner than bot traffic while still giving you useless feedback

- Authentic engagement creates signals you can use for SEO, content, and conversion decisions

The long-term answer to fake website hits isn't only stronger filtering. It's building a traffic strategy that rewards honest behavior.

Human traffic can still be junk

I see this mistake all the time in SEO audits. A team filters obvious bot traffic, sees cleaner reports, and assumes the remaining sessions are good enough to guide strategy.

They aren't, unless the visit carries intent.

If someone lands on the page with no real problem to solve, no fit with the query, and no reason to continue, the session may pass a fraud check while still misleading your team. That kind of traffic can inflate CTR tests, distort engagement benchmarks, and send conversion work in the wrong direction. Stronger website conversion optimization only works when the visitors reflect genuine demand.

A simple standard helps. Keep asking whether you would trust a group of these sessions to influence rankings, content priorities, or page changes. If the answer is no, they belong in the same caution bucket as fake hits.

What a safer alternative looks like

The safer option is traffic built around genuine discovery and believable behavior. That means attracting visitors who search, choose, consume, and respond like actual prospects. It also means refusing shortcuts that create surface-level engagement with no strategic value.

That is why some teams choose organic traffic from real users instead of automation, bulk hits, or proxy-based schemes. The true standard is simple. Use traffic sources that help you measure truth, not traffic sources that manufacture comfort.

Authenticity is not a branding line in SEO. It is quality control for your decision-making.

Teams that operate this way stop chasing sessions for their own sake. They judge traffic by whether it sharpens ranking insight, improves page decisions, and contributes to revenue. That standard is stricter, but it is the only one that holds up over time.

From Vanity Metrics to Real Results

Fake website hits are dangerous because they make weak performance look acceptable.

Once that happens, teams optimize the wrong pages, trust the wrong channels, and defend decisions they should have questioned earlier. Cleaning up traffic isn't just about blocking junk. It's about restoring the link between what analytics says and what the business experiences.

That work doesn't end after one audit. It becomes routine. Review anomalies. Compare traffic with leads and sales. Inspect sessions, not just summaries. Challenge suspicious patterns before they enter your reporting logic.

If you're serious about growth, pair traffic cleanup with stronger website conversion optimization so better data leads to better page decisions, not just prettier dashboards.

The businesses that win here aren't the ones with the biggest traffic charts. They're the ones with the cleanest understanding of visitor quality.

In a web full of bots, clone sites, junk visits, and manufactured noise, authenticity is a competitive advantage. Protect it like one.

If you're trying to improve rankings without poisoning your analytics with fake website hits, ClickSEO offers a safer path. It focuses on real organic clicks and human search behavior instead of bot traffic, helping you strengthen CTR and engagement signals with a risk-aware approach.